Related Articles:

Bitcoin Information & Resources (#GotBitcoin?)

Ultimate Resource For News, Breakthroughs And Innovations In Healthcare

Slow-Twitch vs. Fast-Twitch Muscle Fibers

Chrono-Pharmacology Reveals That “When” You Take Your Medication Can Make A Life-Saving Difference

Jarlsberg Cheese Offers Significant Bone & Heart-Health Benefits Thanks To Vitamin K2, Says Study

Ultimate Resource For Cat Lovers

New Evidence For The Vibration Theory Of Smell

Why You Shouldn’t Buy A Low-End Home Security Camera

Ultimate Resource On Commodity Futures Trading Commission (CFTC) And Its Impact On Bitcoin

Top Institutions And Individuals Trying To Destroy Bitcoin

Ultimate Resource On Online Streaming Services

Large Chinese Bank Protest Put Down With Violence (#GotBitcoin)

The NASA Engineer Who Made The James Webb Space Telescope Work

Ultimate Resource On Brittney Griner Being Held In Russian Jail

Workplace Hazards: What Are The Risks Surrounding Electricians?

“Better Days Ahead With Crypto Deleveraging Coming To An End” — Joker

What Happens When You Put Regular Gas In a Premium Car?

Amazon Prime Rewards “5% Back” Visa Signature Card Review

When World’s Central Banks Get It Wrong, Guess Who Pays The Price? (#GotBitcoin)

Crypto Market Is Closer To A Bottom Than Stocks (#GotBitcoin)

Axon Ditches Plans For Weaponized Taser Drones As Majority Of Ethics Board Resigns

Israeli Tech Firm Rolls Out Tracking Devices The Size Of Postage Stamps

Gas Station Owner In Massachusetts Shuts Pumps To Protest Prices

Fed Money Printer Goes Into Reverse (Quantitative Tightening): What Does It Mean For Crypto?

Mocked As ‘Rubble’ By Biden, Russia’s Ruble Roars Back

Anti-ESG Movement Reveals How Blackrock Pulls-off World’s Largest Ponzi Scheme

$35 TRILLION Global Stock Market Meltdown!!! (#GotBitcoin)

Citi Trader Is Scapegoat For Flash Crash In Europe Stocks (#GotBitcoin)

Building And Running Businesses In The ‘Spirit Of Bitcoin’

Will Community Group Buying Work In The US?

Sri Lanka’s And Lebanon’s Defaults Could Be The First Of Many (#GotBitcoin)

Ultimate Resource On Bitcoin Price Manipulation By Wall Street

Ultimate Resource On Russian Oligarchs And The Impact Of Sanctions On Them

Ultimate Resource For The Crisis Taking Place In The Nickel Market

Does Your Doctor or Hospital Have A Financial Relationship With Big Pharma?

Former World Bank Chief Didn’t Act On Warnings Of Sexual Harassment

AI Experts Warn of Potential Cyberwar Facing Banking Sector vs Bitcoin Which Can Be Stored Off-line

Archaeologists Uncover Five Tombs In Egypt’s Saqqara Necropolis

What The Fed’s Rate Hike Means For Inflation, Housing, Crypto And Stocks

Unusual Side Hustles You May Not Have Thought Of

Happy International Women’s Day! Leaders Share Their Experiences In Crypto

Russia’s Independent Journalists Including Those Who Revealed The Pandora Papers Need Your Help

The Miracle Of Blockchain’s Triple Entry Accounting

Catawba, Native-American Tribe Approves First Digital Economic Zone In The United States

Ultimate Resource On Music And NFTs And The Implications For The Entertainment Industry

Traders Prefer Gold, Fiat Safe Havens Over Bitcoin As Russia Goes To War

Ultimate Resource On Music Catalog Deals

Why We Should Welcome Another Crypto Winter

Canada’s Major Banks Go Offline In Mysterious (Bank Run?) Hours-Long Outage (#GotBitcoin)

The Ultimate Resource For The Bitcoin Miner And The Mining Industry (Page#2) #GotBitcoin

Investors Pool Funds To Help Poor People Sue Big Companies

100 Million Americans Can Legally Bet on the Super Bowl. A Spot Bitcoin ETF? Forget About it!

‘I Cry Every Day’: Olympic Athletes Slam Food, COVID Tests And Conditions In Beijing

Ultimate Resource On Myanmar’s Involvement With Crypto-Currencies

Bitcoin For Corporations | Michael Saylor | Bitcoin Corporate Strategy

Japan’s $1 Trillion Crypto Market May Ease Onerous Listing Rules

How Bitcoin Contributions Funded A $1.4M Solar Installation In Zimbabwe

BREAKING: Arizona State Senator Introduces Bill To Make Bitcoin Legal Tender

Vast Troves of Classified Info Undermine National Security, Spy Chief Says

Inflation And A Tale of Cantillionaires

America COMPETES Act Would Be Disastrous For Bitcoin Cryptocurrency And More

Petition Calling For Resignation Of U.S. Securities/Exchange Commission Chair Gary Gensler

McDonald’s Jumps On Bitcoin Memewagon, Crypto Twitter Responds

Smart Money Is Buying Bitcoin Dip. Stocks, Not So Much

Henrietta Lacks And Her Remarkable Cells Will Finally See Some Payback

Stealing The Blood Of The Young May Make You More Youthful

Doctors Show Implicit Bias Towards Black Patients

Indexing Is Coming To Crypto Funds Via Decentralized Exchanges

Imagine There’s No Bitcoin. If You Can, Then We Haven’t Done Our Jobs

US Stocks Historically Deliver Strong Gains In Fed Hike Cycles (GotBitcoin)

Federal Regulator Says Credit Unions Can Partner With Crypto Providers

Jack Dorsey Announces Bitcoin Legal Defense Fund

Four Ways Black Families Can Fight Against Rising Inflation (#GotBitcoin)

Bitcoin’s Dominance of Crypto Payments Is Starting To Erode

Walmart Filings Reveal Plans To Create Cryptocurrency, NFTs

Ultimate Resource On Duke of York’s Prince Andrew And His Sex Scandal

FDA Approves First-Ever Arthritis Pain Management Drug For Cats

Is Art Therapy The Path To Mental Well-Being?

Arkansas Tries A New Strategy To Lure Tech Workers: Free Bitcoin

Wordle Is The New Lingo Turning Fans Into Argumentative Strategy Nerds

Nas Selling Rights To Two Songs Via Crypto Music Startup Royal

Teen Cyber Prodigy Stumbled Onto Flaw Letting Him Hijack Teslas

Joe Rogan: I Have A Lot Of Hope For Bitcoin

How Black Businesses Can Prosper From Targeting A Trillion-Dollar Black Culture Market (#GotBitcoin)

‘Yellowstone’ Is A Huge Hit That Started With Small-Town Fans

Sidney Poitier, Actor Who Made Oscars History, Dies At 94

How Jessica Simpson Almost Lost Her Name And Her Billion Dollar Empire

Ultimate Resource On Solana Outages And DDoS Attacks

Ultimate Resource On Kazakhstan As Second In Bitcoin Mining Hash Rate In The World After US

Nasdaq-Listed Blockchain Firm BTCS To Offer Dividend In Bitcoin; Shares Surge

Bitcoin Enthusiast And CEO Brian Armstrong Buys Los Angeles Home For $133 Million

Ultimate Resource On A Strong Dollar’s Impact On Bitcoin

Ultimate Resource On Bitcoin Unit Bias

Yosemite Is Forcing Native American Homeowners To Leave Without Compensation. Here’s Why

Raoul Pal Believes Institutions Have Finished Taking Profits As Year Winds Up

10 Women Who Used Crypto To Make A Difference In 2021

Disposable Masks That Don’t Pollute

Move Over, Tennis And Golf. Networks And Brands Are Cashing In On Pickleball

Athletes Unlimited Signs Big Sponsor In Boost For Women’s Sports

Grounded By The Pandemic? Donate Your Unused Points And Miles

Crypto And Its Many Fees: What To Know About The Hidden Costs Of Digital Currency

Deputizing Blockchain To Fight Corrupt Governments. Also America Blows Its Tokenization Advantage

Crypto Attracts More Money In 2021 Than All Previous Years Combined

The US Shouldn’t Be Afraid of China

Ultimate Resource On Donald J. Trump

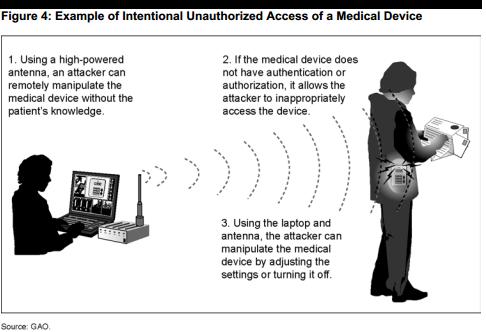

Ubiquitous Surveillance And Security

Finding Your Superpower In A Higher-Priced World With On-The-Spot Comparison Shopping

How Remanufacturing Is Combating Global Warming

Shoppers Lined Up At Dawn For The ‘Open Run’ On $9,500 Chanel Bags

How Banks Win When Interest Rates Rise (#GotBitcoin)

Crypto, NFTs And Tungsten Cubes: A Guide To Giving Cash In 2021

Tech Giants Apple, Microsoft, Amazon And Others Warn of Widespread Software Flaw

Ultimate Resource On Web3 And Crypto’s Attempt To Reinvent The Internet

Bitcoin Core Developer Samuel Dobson Decides It Is ‘Time To Go’

The Biden Economy Is Actually Pretty Good

Live: House Memo Details Congress’ Priorities Ahead Of Crypto CEO Hearing

Verizon is Tracking iPhone Users by Default And There’s Nothing Apple Can Do. How to Turn It Off

A Plant-based Pet Food Frenzy Is On The Horizon

What Is Cryptocurrency, And How Does It Work?

One Of The Weirdest Reports: Investors React To 12-3-2021 Jobs Data (#GotBitcoin)

Ultimate Resource On China’s ‘Common Prosperity’ Drive How It Plans To Redistribute The Wealth

Note To Brands: Crypto Isn’t Funny Money. It’s Community

How Crypto Vigilantes Are Hunting Scams In A $100 Billion Market

6 Questions For Lyn Alden Schwartzer Of Lyn Alden Investment Strategy

Bitcoin Offers Little Refuge From Covid Market Rout

The Anti-Work Brigade Targets Amazon On Black Friday

Reddit’s Latest Money-Making Obsession Is An Obscure Fed Facility (Reverse Repo or RRP)

Federal Reserve To Taper Money Printing That Fueled Bitcoin Rally

Apple Sues NSO Group To Curb The Abuse Of State-Sponsored Spyware

Did You Buy Your Cat or Dog A Christmas Present? Join The Club

Gen Z Has $360 Billion To Spend, Trick Is Getting Them To Buy (#GotBitcoin)

10 Fundamental Rights For All Crypto Users Including Responsibilities For The Industry

Texas Plans To Become The Bitcoin Capital, Vulnerable Power Grid And All

Bitcoin Caught Between Longer-Term Buyers, Leveraged Speculators

Outcry Grows As China Breaks Silence On Missing Tennis Star

China Left In Shock Following Brutal Killing Of Corgi During Covid-19 Disinfection

Biden Orders Feds To Tackle ‘Epidemic’ Of Missing Or Murdered Indigenous People

An Antidote To Inflation? ‘Buy Nothing’ Groups Gain Popularity

Ultimate Resource For Cooks, Chefs And The Latest Food Trends

Open Enrollment Gameplan: How To Compare Your Partner’s Health Insurance With Yours

Millions Of Americans Are Skipping The Dentist. Lenders See A Financing Niche

Matt Damon To Promote Crypto.Com In Race To Lure New Users

US General Likens China’s Hypersonic Missile Test To A ‘Sputnik Moment’

The Key To Tracking Corona And Any Other Virus Could Be Your Poo

How To Evade A Chinese Bitcoin Ban

US Government Failed To Spot Pedophile At Indian Health Service Hospitals

Netflix Defends Dave Chappelle While Seeing “Squid Game” Become Its Biggest Hit Ever

Ultimate Resource On Various Countries Adopting Bitcoin

Ultimate Resource On Bitcoin Billionaires

Stripe Re-Enters Processing Payments Using Cryptos 3 Years After Dropping Bitcoin

Why Is My Cat Rubbing His Face In Ants?

Pets Score Company Perks As The ‘New Dependents’

What Pet Owners Should Know About Chronic Kidney Disease In Dogs And Cats

The Facebook Whistleblower, Frances Haugen, Says She Wants To Fix The Company, Not Harm It

Walk-in Cryptocurrency Exchanges Emerge Amid Bitcoin Boom

Europe’s Giant Job-Saving Experiment Pays Off In Pandemic

To Survive The Pandemic, Entrepreneurs Might Try Learning From Nature

Rise of The FinFluencer And How They Target Young And Inexperienced Investors

Hyperinflation Concerns Top The Worry List For UBS Clients

Sotheby’s Opens First-Ever Exhibition of Black Jewelry Designers

America And Europe Face Bleak Winter As Energy Prices Surge To Record Levels

Ultimate Resource On Vaccine Boosters

Unvaccinated COVID-19 Hospitalizations Cost The U.S. Health System Billions Of Dollars

Wallets Are Over. Your Phone Is Your Everything Now

Crypto Mining Demand Soars In Vietnam Amid Bitcoin Rally

How The Supreme Court Texas Abortion Ruling Spurred A Wave Of ‘Rage Giving’

Ultimate Resource On Global Inflation And Rising Interest Rates (#GotBitcoin)

Presearch Decentralized Crypto-Powered Search Engine

How To Make Vietnam A Powerful Trade Ally For The U.S.

Banking Could Go The Way of News Publishing (#GotBitcoin)

Operation “Choke Point”: An Aggressive FTC And The Response of The Payment Systems Industry

Bezos-Backed Fusion Startup Picks U.K. To Build First Plant

TikTok Is The Place To Go For Financial Advice If You’re A Young Adult

More Companies Weigh Penalizing Employees Without Covid-19 Vaccinations

Crypto Firms Want Fed Payment Systems Access—And Banks Are Resisting

Travel Expert Oneika Raymond’s Favorite Movies And Shows

How To Travel Luxuriously In The Summer Of Covid-19, From Private Jets To Hotel Buyouts

Nurses Travel From Coronavirus Hot Spot To Hot Spot, From New York To Texas

Travel Is Bouncing Back From Coronavirus, But Tourists Told To Stick Close To Home

Tricks For Making A Vacation Feel Longer—And More Fulfilling

Who Is A Perpetual Traveler (AKA Digital Nomad) Under The US Tax Code

Four Stories Of How People Traveled During Covid

Director Barry Jenkins Is The Travel Nerd’s Travel Nerd

Natural Cure For Hyperthyroidism In Cats Including How To Switch Him/Her To A Raw Food Diet

A Paycheck In Crypto? There Might Be Some Headaches

About 46 Million Americans Or 17% Now Own Bitcoin vs 50% Who Own Stocks

Josephine Baker Is 1st Black Woman Given Paris Burial Honor

Cryptocurrency’s Surge Leaves Global Watchdogs Trying To Catch Up

It Could Just Be The U.S. Is Not The Center Of The Crypto Universe

Blue Turmeric Is The Latest Super Spice To Shake Up Pantry Shelves

Vice President Kamala Harris To Focus On Countering China On Southeast Asia Trip

The Vaccinated Are Worried And Scientists Don’t Have Answers

Silver Lining of Coronavirus, Return of Animals, Clear Skies, Quiet Streets And Tranquil Shores

Cocoa Cartel Stirs Up Global Chocolate Market With Increased Use Of Child Labor

Killer Whale Dies Suddenly At SeaWorld San Diego

Does Getting Stoned Help You Get Toned? Gym Rats Embrace Marijuana

Hope Wiseman Is The Youngest Black Woman Dispensary Owner In The United States

What Sex Workers Want To Do With Bitcoin

How A Video Résumé Can Get You Hired In The Covid-19 Job Market

US Lawmakers Urge CFTC And SEC To Form Joint Working Group On Digital Assets

Bitcoin’s Surge Lacks Extreme Leverage That Powered Past Rallies

JP Morgan Says, “Proof-Of-Stake Will Eat Proof-Of-Work For Breakfast”: Here’s Why

Ranking The Currencies That Could Unseat The Dollar (#GotBitcoin)

The Botanist Daring To Ask: What If Plants Have Intelligence?

After A Year Without Rowdy Tourists, European Cities Want To Keep It That Way

Prospering In The Pandemic, Some Feel Financial Guilt And Gratitude

The Secret Club For Billionaires Who Care About Climate Change

A List Of Relief Funds For Restaurants, Bars, And Food Service Workers

Bill And Melinda Gates Welcome The Philanthropists Of The Future

CO2-Capture Plan Using Old Oil Reservoirs In Denmark Moves Ahead

Could This Be The Digital Nose Of The Future?

Bitcoin Fans Are Suddenly A Political Force

Walmart Seeks Crypto Product Lead To Drive Digital Currency Strategy

Music Distributor DistroKid Raises Money At $1.3 Billion Valuation

Books To Read, Foods To Eat, Movies To Watch, Exercises To Do And More During Covid19 Lockdown

Hacker Claims To Steal Data Of 100 Million T-Mobile Customers

Is The Cryptocurrency Epicenter Moving Away From East Asia?

Vietnam Leads Crypto Adoption In Finder’s 27-Country Survey

Bitcoin’s Latest Surge Lacks Extreme Leverage That Powered Past Rallies

Creatine Supplementation And Brain Health

Nigerian State Says 337 Students Missing After Gunmen Attack

America’s 690 Mile-Long Yard Sale Entices A Nation of Deal Hunters

Russian Economy Grew (10.3% GDP) Fastest Since 2000 On Lockdown Rebound

US Troops Going Hungry (Food Insecurity) Is A National Disgrace

Overheated, Unprepared And Under-protected: Climate Change Is Killing People, Pets And Crops

Accenture Confirms Hack After LockBit Ransomware Data Leak Threats

Climate Change Prompts These Six Pests To Come And Eat Your Crops

Leaked EU Plan To Green Its Timber Industry Sparks Firestorm

Students Take To The Streets For Day Of Action On Climate Change

Living In Puerto Rico, Where The Taxes Are Low And Crypto Thrives

Bitcoin Community Leaders Join Longevity Movement

Some Climate Change Effects May Be Irreversible, U.N. Panel Says

Israel’s Mossad Intelligence Agency Is Seeking To Hire A Crypto Expert

What You Should Know About ‘529’ Education-Savings Accounts

Bitcoin Doesn’t Need Presidents, But Presidents Need Bitcoin

Plant-Based Fish Is Rattling The Multibillion-Dollar Seafood Industry

The Scientific Thrill Of The Charcoal Grill

Escaping The Efficiency Trap—and Finding Some Peace of Mind

Sotheby’s Selling Exhibition Celebrates 21 Black Jewelry Designers

Marketers Plan Giveaways For Covid-19 Vaccine Recipients

Haiti’s President Moise Assassinated In Night Attack On His Home

The Argentine River That Carries Soybeans To World Is Drying Up

A Harvard Deal Tries To Break The Charmed Circle Of White Wealth

China Three-Child Policy Aims To Rejuvenate Aging Population

California Wants Its Salton Sea Located In The Imperial Valley To Be ‘Lithium Valley’

Who Will Win The Metaverse? Not Mark Zuckerberg or Facebook

The Biggest Challenge For Crypto Exchanges Is Global Price Fragmentation

London Block Exchange – LBX Buy, Sell & Trade Cryptocurrencies?

Chicago World’s Fair Of Money To Unveil A Private Coin Collection Worth Millions of Dollars

Bitcoin Dominance On The Rise Once Again As Crypto Market Rallies

Uruguayan Senator Introduces Bill To Enable Use Of Crypto For Payments

Talen Energy Investors Await Update From The Top After Pivot To Crypto

Ultimate Resource On Hydrogen And Green Hydrogen As Alternative Energy

Covid Made The Chief Medical Officer A C-Suite Must

When Will Stocks Drop? Watch Profit Margins (And Get Nervous)

Behind The Rise Of U.S. Solar Power, A Mountain of Chinese Coal

‘Buy Now, Pay Later’ Installment Plans Are Having A Moment Again

US Crypto Traders Evade Offshore Exchange Bans

5 Easy Ways Crypto Investors Can Make Money Without Needing To Trade

What’s A ‘Pingdemic’ And Why Is The U.K. Having One?

“Crypto-Property:” Ohio Court Says Crypto-Currency Is Personal Property Under Homeowners’ Policy

Retinal And/Or Brain Photon Emissions

Antibiotic Makers Concede In The War On Superbugs (#GotColloidalSilver)

What It Takes To Reconnect Black Communities Torn Apart by Highways

Those Probiotics May Actually Be Hurting Your ‘Gut Health’

We’re Not Prepared To Live In This Surveillance Society

How To Create NFTs On The Bitcoin Blockchain

World Health Organization Forced Valium Into Israeli And Palestinian Water Supply

Blockchain Fail-Safes In Space: Spacechain, Blockstream And Cryptosat

What Is A Digital Nomad And How Do You Become One?

Flying Private Is Cheaper Than You Think — Here Are 6 Airlines To Consider For Your Next Flight

What Hackers Can Learn About You From Your Social-Media Profile

How To Protect Your Online Privacy While Working From Home

Rising Diaper Prices Prompt States To Get Behind Push To Pay

Want To Invest In Cybersecurity? Here Are Some ETFs To Consider

US Drops Visa Fraud Cases Against Five Chinese Researchers

Risks To Great Barrier Reef Could Thwart Tycoon’s Coal Plans

The Super Rich Are Choosing Singapore As The World’s Safest Haven

Jack Dorsey Advocates Ending Police Brutality In Nigeria Through Bitcoin

Psychedelics Replace Pot As The New Favorite Edgy Investment

Who Gets How Much: Big Questions About Reparations For Slavery

US City To Pay Reparations To African-American Community With Tax On Marijuana Sales

Crypto-Friendly Investment Search Engine Vincent Raises $6M

Trading Firm Of Richest Crypto Billionaire Reveals Buying ‘A Lot More’ Bitcoin Below $30K

Bitcoin Security Still A Concern For Some Institutional Investors

Weaponizing Blockchain — Vast Potential, But Projects Are Kept Secret

China Is Pumping Money Out Of The US With Bitcoin

Tennessee City Wants To Accept Property Tax Payments In Bitcoin

Currency Experts Say Cryptonotes, Smart Banknotes And Cryptobanknotes Are In Our Future

Housing Insecurity Is Now A Concern In Addition To Food Insecurity

Food Insecurity Driven By Climate Change Has Central Americans Fleeing To The U.S.

Eco Wave Power Global (“EWPG”) Is A Leading Onshore Wave Energy Technology Company

How And Why To Stimulate Your Vagus Nerve!

Green Finance Isn’t Going Where It’s Needed

Shedding Some Light On The Murky World Of ESG Metrics

SEC Targets Greenwashers To Bring Law And Order To ESG

Spike Lee’s TV Ad For Crypto Touts It As New Money For A Diverse World

Bitcoin Network Node Count Sets New All-Time High

Tesla Needs The Bitcoin Lightning Network For Its Autonomous ‘Robotaxi’ Fleet

How To Buy Bitcoin: A Guide To Investing In The Cryptocurrency

How Crypto is Primed To Transform Movie Financing

Paraguayan Lawmakers To Present Bitcoin Bill On July 14

Bitcoin’s Biggest Hack In History: 184.4 Billion Bitcoin From Thin Air

Paul Sztorc On Measuring Decentralization Of Nodes And Blind Merge Mining

Reality Show Is Casting Crypto Users Locked Out Of Their Wallets

EA, Other Videogame Companies Target Mobile Gaming As Pandemic Wanes

Strike To Offer ‘No Fee’ Bitcoin Trading, Taking Aim At Coinbase And Square

Coinbase Reveals Plans For Crypto App Store Amid Global Refocus

Mexico May Not Be Following El Salvador’s Example On Bitcoin… Yet

What The Crypto Crowd Doesn’t Understand About Economics

My Local Crypto Space Just Got Raided By The Feds. You Know The Feds Scared Of Crypto

Bitcoin Slumps Toward Another ‘Crypto Winter’

NYC’s Mayoral Frontrunner Pledges To Turn City Into Bitcoin Hub

Lyn Alden On Bitcoin, Inflation And The Potential Coming Energy Shock

$71B In Crypto Has Reportedly Passed Through ‘Blockchain Island’ Malta Since 2017

Startups Race Microsoft To Find Better Ways To Cool Data Centers

Why PCs Are Turning Into Giant Phones

Panama To Present Crypto-Related Bill In July

Hawaii Had Largest Increase In Demand For Crypto Out Of US States This Year

What To Expect From Bitcoin As A Legal Tender

Petition: Satoshi Nakamoto Should Receive The Nobel Peace Prize

Can Bitcoin Turbo-Charge The Asset Management Industry?

Bitcoin Interest Drops In China Amid Crackdown On Social Media And Miners

Multi-trillion Asset Manager State Street Launches Digital Currency Division

Wall Street’s Crypto Embrace Shows In Crowd At Miami Conference

MIT Bitcoin Experiment Nets 13,000% Windfall For Students Who Held On

Petition: Let’s Make Bitcoin Legal Tender For United States of America

El Salvador Plans Bill To Adopt Bitcoin As Legal Tender

What Is Dollar Cost Averaging Bitcoin?

Paxful Launches Tool Allowing Businesses To Receive Payment In Bitcoin

CEOs Of Top Russian Banks Sberbank And VTB Blast Bitcoin

President of El Salvador Says He’s Submitting Bill To Make Bitcoin Legal Tender

Bitcoin Falls As Weibo Appears To Suspend Some Crypto Accounts

Israel-Gaza Conflict Spurs Bitcoin Donations To Hamas

Bitcoin Bond Launch Brings Digital Currency Step Closer To ‘World Of High Finance’

Worst Month For BTC Price In 10 Years: 5 Things To Watch In Bitcoin

Bitcoin Card Game Bitopoly Launches

Carbon-Neutral Bitcoin Funds Gain Traction As Investors Seek Greener Crypto

Ultimate Resource On The Bitcoin Mining Council

Libertarian Activists Launch Bitcoin Embassy In New Hampshire

Why The Bitcoin Crash Was A Big Win For Cryptocurrencies

Treasury Calls For Crypto Transfers Over $10,000 To Be Reported To IRS

Crypto Traders Can Automate Legal Requests With New DoNotPay Services

Bitcoin Marches Away From Crypto Pack In Show of Resiliency

NBA Top Shot Lawsuit Says Dapper’s NFTs Need SEC Clampdown

Maximalists At The Movies: Bitcoiners Crowdfunding Anti-FUD Documentary Film

Caitlin Long Reveals The ‘Real Reason’ People Are Selling Crypto

Microsoft Quietly Closing Down Azure Blockchain In September

How Much Energy Does Bitcoin Actually Consume?

Bitcoin Should Be Priced In Sats And How Do We Deliver This Message

Bitcoin Loses 6% In An Hour After Tesla Drops Payments Over Carbon Concerns

Crypto Twitter Decodes Why Zuck Really Named His Goats ‘Max’ And ‘Bitcoin’

Bitcoin Pullback Risk Rises As Whales Resume Selling

Thiel-Backed Block.one Injects Billions In Crypto Exchange

Sequoia, Tiger Global Boost Crypto Bet With Start-up Lender Babel

Here’s How To Tell The Difference Between Bitcoin And Ethereum

In Crypto, Sometimes The Best Thing You Can Do Is Nothing

Crypto Community Remembers Hal Finney’s Contributions To Blockchain On His 65th Birthday

DJ Khaled ft. Nas, JAY-Z & James Fauntleroy And Harmonies Rap Bitcoin Wealth

The Two Big Themes In The Crypto Market Right Now

Crypto Could Still Be In Its Infancy, Says T. Rowe Price’s CEO

Governing Body Of Louisiana Gives Bitcoin Its Nod Of Approval

Sports Athletes Getting Rich From Bitcoin

Behind Bitcoin’s Recent Slide: Imploding Bets And Forced Liquidations

Bad Omen? US Dollar And Bitcoin Are Both Slumping In A Rare Trend

Wall Street Starts To See Weakness Emerge In Bitcoin Charts

Crypto For The Long Term: What’s The Outlook?

Mix of Old, Wrong And Dubious ‘News/FUD’ Scares Rookie Investors, Fuels Crypto Selloff

Wall Street Pays Attention As Bitcoin Market Cap Nears The Valuation Of Google

Bitcoin Price Drops To $52K, Liquidating Almost $10B In Over-Leveraged Longs

Bitcoin Funding Rates Crash To Lowest Levels In 7 Months, Peak Fear?

Investors’ On-Chain Activity Hints At Bitcoin Price Cycle Top Above $166,000

This Vegan Billionaire Disrupted The Crypto Markets. Now He Wants To Tokenize Stocks

Black Americans Are Embracing Bitcoin To Make Up For Stolen Time

Rap Icon Nas Could Net $100M When Coinbase Lists on Nasdaq

The First Truly Native Cross-Chain DEX Is About To Go Live

Reminiscing On Past ‘Bitcoin Faucet’ Website That Gave Away 19,700 BTC For Free

3X As Many Crypto Figures Make It Onto Forbes 2021 Billionaires List As Last Year

Bubble Or A Drop In The Ocean? Putting Bitcoin’s $1 Trillion Milestone Into Perspective

Pension Funds And Insurance Firms Alive To Bitcoin Investment Proposal

Here’s Why April May Be The Best Month Yet For Bitcoin Price

Blockchain-Based Renewable Energy Marketplaces Gain Traction In 2021

Crypto Firms Got More Funding Last Quarter Than In All of 2020

Government-Backed Bitcoin Hash Wars Will Be The New Space Race

Lars Wood On Enhanced SAT Solvers Based Hashing Method For Bitcoin Mining

Morgan Stanley Adds Bitcoin Exposure To 12 Investment Funds

One BTC Will Be Worth A Lambo By 2022, And A Bugatti By 2023: Kraken CEO

Rocketing Bitcoin Price Provides Refuge For The Brave

Bitcoin Is 3rd Largest World Currency

Does BlockFi’s Risk Justify The Reward?

Crypto Media Runs With The Bulls As New Entrants Compete Against Established Brands

Bitcoin’s Never-Ending Bubble And Other Mysteries

The Last Dip Is The Deepest As Wife Leaves Husband For Buying More Bitcoin

Blockchain.com Raises $300 Million As Investors Find Other Ways Into Bitcoin

What Is BitClout? The Social Media Experiment Sparking Controversy On Twitter

Bitcoin Searches In Turkey Spike 566% After Turkish Lira Drops 14%

Crypto Is Banned In Morocco, But Bitcoin Purchases Are Soaring

Bitcoin Can Be Sent With A Tweet As Bottlepay Twitter App Goes Live

Rise of Crypto Market’s Quiet Giants Has Big Market Implications

Canadian Property Firm Buys Bitcoin In Hopes Of Eventually Scrapping Condo Fees

Bitcoin Price Gets Fed Boost But Bond Yields Could Play Spoilsport: Analysts

Bank of America Claims It Costs Just $93 Million To Move Bitcoin’s Price By 1%

Would A US Wealth Tax Push Millionaires To Bitcoin Adoption?

NYDIG Head Says Major Firms Will Announce Bitcoin ‘Milestones’ Next Week

Signal Encrypted Messenger Now Accepts Donations In Bitcoin

Bitcoin Is Now Worth More Than Visa And Mastercard Combined

Retail Bitcoin Customers Rival Wall Street Buyers As Mania Builds

Crypto’s Rising. So Are The Stakes For Governments Everywhere

Bitcoin Falls After Weekend Rally Pushes Token To Fresh Record

Oakland A’s Major League Baseball Team Now Accepts Bitcoin For Suites

Students In Georgia Set To Be Taught About Crypto At High School

What You Need To Know About Bitcoin Now

Bitcoin Winning Streak Now At 7 Days As Fresh Stimulus Keeps Inflation Bet Alive

Bitcoin Intraday Trading Pattern Emerges As Institutions Pile In

If 60/40 Recipe Sours, Maybe Stir In Some Bitcoin

Explaining Bitcoin’s Speculative Attack On The Dollar

VIX-Like Gauge For Bitcoin Sees Its First-Ever Options Trade

A Utopian Vision Gets A Blockchain Twist In Nevada

Crypto Influencers Scramble To Recover Twitter Accounts After Suspensions

Bitcoin Breaks Through $57,000 As Risk Appetite Revives

Analyzing Bitcoin’s Network Effect

US Government To Sell 0.7501 Bitcoin Worth $38,000 At Current Prices

Pro Traders Avoid Bitcoin Longs While Cautiously Watching DXY Strengthen

Bitcoin Hits Highest Level In Two Weeks As Big-Money Bets Flow

OG Status In Crypto Is A Liability

Bridging The Bitcoin Gender Gap: Crypto Lets Everyone Access Wealth

HODLing Early Leads To Relationship Troubles? Redditors Share Their Stories

Want To Be Rich? Bitcoin’s Limited Supply Cap Means You Only Need 0.01 BTC

You Can Earn 6%, 8%, Even 12% On A Bitcoin ‘Savings Account’—Yeah, Right

Egyptians Are Buying Bitcoin Despite Prohibitive New Banking Laws

Is March Historically A Bad Month For Bitcoin?

Bitcoin Falls 4% As Fed’s Powell Sees ‘Concern’ Over Rising Bond Yields

US Retailers See Millions In Lost Sales Due To Port Congestion, Shortage Of Containers

Pandemic-Relief Aid Boosts Household Income Which Causes Artificial Economic Stimulus

YouTube Suspends CoinDesk’s Channel Over Unspecified Violations

It’s Gates Versus Musk As World’s Richest Spar Over Bitcoin

Charlie Munger Is Sure Bitcoin Will Fail To Become A Global Medium Of Exchange

Bitcoin Is Minting Thousands Of Crypto ‘Diamond Hands’ Millionaires Complete W/Laser Eyes

Dubai’s IBC Group Pledges 100,000 Bitcoin ($4.8 Billion) 20% Of All Bitcoin, Largest So Far

Bitcoin’s Value Is All In The Eye Of The ‘Bithodler’

Bitcoin Is Hitting Record Highs. Why It’s Not Too Late To Dig For Digital Gold

$56.3K Bitcoin Price And $1 Trillion Market Cap Signal BTC Is Here To Stay

Christie’s Auction House Will Now Accept Cryptocurrency

Why A Chinese New Year Bitcoin Sell-Off Did Not Happen This Year

The US Federal Reserve Will Adopt Bitcoin As A Reserve Asset

Motley Fool Adding $5M In Bitcoin To Its ‘10X Portfolio’ — Has A $500K Price Target

German Cannabis Company Hedges With Bitcoin In Case Euro Crashes

Bitcoin: What To Know Before Investing

China’s Cryptocurrency Stocks Left Behind In Bitcoin Frenzy

Bitcoin’s Epic Run Is Winning More Attention On Wall Street

Bitcoin Jumps To $50,000 As Record-Breaking Rally Accelerates

Bitcoin’s Volatility Should Burn Investors. It Hasn’t

Bitcoin’s Latest Record Run Is Less Volatile Than The 2017 Boom

Blockchain As A Replacement To The MERS (Mortgage Electronic Registration System)

The Ultimate Resource On “PriFi” Or Private Finance

Deutsche Bank To Offer Bitcoin Custody Services

BeanCoin Currency Casts Lifeline To Closed New Orleans Bars

Bitcoin Could Enter ‘Supercycle’ As Fed Balance Sheet Hits New Record High

Crypto Mogul Bets On ‘Meme Investing’ With Millions In GameStop

Iran’s Central Bank Acquires Bitcoin Even Though Lagarde Says Central Banks Will Not Hold Bitcoin

Bitcoin To Come To America’s Oldest Bank, BNY Mellon

Tesla’s Bitcoin-Equals-Cash View Isn’t Shared By All Crypto Owners

How A Lawsuit Against The IRS Is Trying To Expand Privacy For Crypto Users

Apple Should Launch Own Crypto Exchange, RBC Analyst Says

Bitcoin Hits $43K All-Time High As Tesla Invests $1.5 Billion In BTC

Bitcoin Bounces Off Top of Recent Price Range

Top Fiat Currencies By Market Capitalization VS Bitcoin

Bitcoin Eyes $50K Less Than A Month After BTC Price Broke Its 2017 All-Time High

Investors Piling Into Overvalued Crypto Funds Risk A Painful Exit

Parents Should Be Aware Of Their Children’s Crypto Tax Liabilities

Miami Mayor Says City Employees Should Be Able To Take Their Salaries In Bitcoin

Bitcoiners Get Last Laugh As IBM’s “Blockchain Not Bitcoin” Effort Goes Belly-up

Bitcoin Accounts Offer 3-12% Rates In A Low-Interest World

Analyst Says Bitcoin Price Sell-Off May Occur As Chinese New Year Approaches

Why The Crypto World Needs To Build An Amazon Of Its Own

Tor Project’s Crypto Donations Increased 23% In 2020

Social Trading Platform eToro Ended 2020 With $600M In Revenue

Bitcoin Billionaire Set To Run For California Governor

GameStop Investing Craze ‘Proof of Concept’ For Bitcoin Success

Bitcoin Entrepreneurs Install Mining Rigs In Cars. Will Trucks And Tractor Trailers Be Next?

Harvard, Yale, Brown Endowments Have Been Buying Bitcoin For At Least A Year

Bitcoin Return To $40,000 In Doubt As Flows To Key Fund Slow

Ultimate Resource For Leading Non-Profits Focused On Policy Issues Facing Cryptocurrencies

Regulate Cryptocurrencies? Not Yet

Check Out These Cryptocurrency Clubs And Bitcoin Groups!

Blockchain Brings Unicorns To Millennials

Crypto-Industry Prepares For Onslaught Of Public Listings

Bitcoin Core Lead Maintainer Steps Back, Encourages Decentralization

Here Are Very Bitcoiny Ways To Get Bitcoin Exposure

To Understand Bitcoin, Just Think of It As A Faith-Based Asset

Cryptos Won’t Work As Actual Currencies, UBS Economist Says

Older Investors Are Getting Into Crypto, New Survey Finds

Access Denied: Banks Seem Prone To Cryptophobia Despite Growing Adoption

Pro Traders Buy The Dip As Bulls Address A Trifecta Of FUD News Announcements

Andreas Antonopoulos And Others Debunk Bitcoin Double-Spend FUD

New Bitcoin Investors Explain Why They’re Buying At Record Prices

When Crypto And Traditional Investors Forget Fundamentals, The Market Is Broken

First Hyperledger-based Cryptocurrency Explodes 486% Overnight On Bittrex BTC Listing

Bitcoin Steady As Analysts Say Getting Back To $40,000 Is Key

Coinbase, MEVP Invest In Crypto-Asset Startup Rain

Synthetic Dreams: Wrapped Crypto Assets Gain Traction Amid Surging Market

Secure Bitcoin Self-Custody: Balancing Safety And Ease Of Use

UBS (A Totally Corrupt And Criminal Bank) Warns Clients Crypto Prices Can Actually Go To Zero

Bitcoin Swings Undermine CFO Case For Converting Cash To Crypto

CoinLab Cuts Deal With Mt. Gox Trustee Over Bitcoin Claims

Bitcoin Slides Under $35K Despite Biden Unveiling $1.9 Trillion Stimulus

Bitcoin Refuses To ‘Die’ As BTC Price Hits $40K Just Three Days After Crash

Ex-Ripple CTO Can’t Remember Password To Access $240M In Bitcoin

Financial Advisers Are Betting On Bitcoin As A Hedge

ECB President Christine Lagarde (French Convict) Says, Bitcoin Enables “Funny Business.”

German Police Shut Down Darknet Marketplace That Traded Bitcoin

Bitcoin Miner That’s Risen 1,400% Says More Regulation Is Needed

Bitcoin Rebounds While Leaving Everyone In Dark On True Worth

UK Treasury Calls For Feedback On Approach To Cryptocurrency And Stablecoin Regulation

What Crypto Users Need To Know About Changes At The SEC

Where Does This 28% Bitcoin Price Drop Rank In History? Not Even In The Top 5

Seven Times That US Regulators Stepped Into Crypto In 2020

Retail Has Arrived As Paypal Clears $242M In Crypto Sales Nearly Double The Previous Record

Bitcoin’s Slide Dents Price Momentum That Dwarfed Everything

Does Bitcoin Boom Mean ‘Better Gold’ Or Bigger Bubble?

Bitcoin Whales Are Profiting As ‘Weak Hands’ Sell BTC After Price Correction

Crypto User Recovers Long-Lost Private Keys To Access $4M In Bitcoin

The Case For And Against Investing In Bitcoin

Bitcoin’s Wild Weekends Turn Efficient Market Theory Inside Out

Bitcoin Price Briefly Surpasses Market Cap Of Tencent

Broker Touts Exotic Bitcoin Bet To Squeeze Income From Crypto

Tesla’s Crypto-Friendly CEO Is Now The Richest Man In The World

Crypto Market Cap Breaks $1 Trillion Following Jaw-Dropping Rally

Gamblers Could Use Bitcoin At Slot Machines With New Patent

Crypto Users Donate $400K To Julian Assange Defense As Mexico Proposes Asylum

Grayscale Ethereum Trust Fell 22% Despite Rally In Holdings

Bitcoin’s Bulls Should Fear Its Other Scarcity Problem

Ether Follows Bitcoin To Record High Amid Dizzying Crypto Rally

Retail Investors Are Largely Uninvolved As Bitcoin Price Chases $40K

Bitcoin Breaches $34,000 As Rally Extends Into New Year

Social Media Interest In Bitcoin Hits All-Time High

Bitcoin Price Quickly Climbs To $31K, Liquidating $100M Of Shorts

How Massive Bitcoin Buyer Activity On Coinbase Propelled BTC Price Past $32K

FinCEN Wants US Citizens To Disclose Offshore Crypto Holdings of $10K+

Governments Will Start To Hodl Bitcoin In 2021

Crypto-Linked Stocks Extend Rally That Produced 400% Gains

‘Bitcoin Liquidity Crisis’ — BTC Is Becoming Harder To Buy On Exchanges, Data Shows

Bitcoin Looks To Gain Traction In Payments

BTC Market Cap Now Over Half A Trillion Dollars. Major Weekly Candle Closed!!

Elon Musk And Satoshi Nakamoto Making Millionaires At Record Pace

Binance Enables SegWit Support For Bitcoin Deposits As Adoption Grows

Satoshi Nakamoto Delivers $24.5K Christmas Gift With Another New All-Time High

Bitcoin’s Rally Has Already Outlasted 2017’s Epic Run

Gifting Crypto To Loved Ones This Holiday? Educate Them First

Scaramucci’s SkyBridge Files With SEC To Launch Bitcoin Fund

Samsung Integrates Bitcoin Wallets And Exchange Into Galaxy Phones

HTC Smartphone Will Run A Full Bitcoin Node (#GotBitcoin?)

HTC’s New 5G Router Can Host A Full Bitcoin Node

Bitcoin Miners Are Heating Homes Free of Charge

Bitcoin Miners Will Someday Be Incorporated Into Household Appliances

Musk Inquires About Moving ‘Large Transactions’ To Bitcoin

How To Invest In Bitcoin: It Can Be Easy, But Watch Out For Fees

Megan Thee Stallion Gives Away $1 Million In Bitcoin

CoinFLEX Sets Up Short-Term Lending Facility For Crypto Traders

Wall Street Quants Pounce On Crypto Industry And Some Are Not Sure What To Make Of It

Bitcoin Shortage As Wall Street FOMO Turns BTC Whales Into ‘Plankton’

Bitcoin Tops $22,000 And Strategists Say Rally Has Further To Go

Why Bitcoin Is Overpriced by More Than 50%

Kraken Exchange Will Integrate Bitcoin’s Lightning Network In 2021

New To Bitcoin? Stay Safe And Avoid These Common Scams

Andreas M. Antonopoulos And Simon Dixon Say Don’t Buy Bitcoin!

Famous Former Bitcoin Critics Who Conceded In 2020

Jim Cramer Bought Bitcoin While ‘Off Nicely From The Top’ In $17,000s

The Wealthy Are Jumping Into Bitcoin As Stigma Around Crypto Fades

WordPress Adds Official Ethereum Ad Plugin

France Moves To Ban Anonymous Crypto Accounts To Prevent Money Laundering

10 Predictions For 2021: China, Bitcoin, Taxes, Stablecoins And More

Movie Based On Darknet Market Silk Road Premiering In February

Crypto Funds Have Seen Record Investment Inflow In Recent Weeks

US Gov Is Bitcoin’s Last Remaining Adversary, Says Messari Founder

$1,200 US Stimulus Check Is Now Worth Almost $4,000 If Invested In Bitcoin

German Bank Launches Crypto Fund Covering Portfolio Of Digital Assets

World Governments Agree On Importance Of Crypto Regulation At G-7 Meeting

Why Some Investors Get Bitcoin So Wrong, And What That Says About Its Strengths

It’s Not About Data Ownership, It’s About Data Control, EFF Director Says

‘It Will Send BTC’ — On-Chain Analyst Says Bitcoin Hodlers Are Only Getting Stronger

Bitcoin Arrives On Wall Street: S&P Dow Jones Launching Crypto Indexes In 2021

Audio Streaming Giant Spotify Is Looking Into Crypto Payments

BlackRock (Assets Under Management $7.4 Trillion) CEO: Bitcoin Has Caught Our Attention

Bitcoin Moves $500K Around The Globe Every Second, Says Samson Mow

Pomp Talks Shark Tank’s Kevin O’Leary Into Buying ‘A Little More’ Bitcoin

Bitcoin Is The Tulipmania That Refuses To Die

Ultimate Resource On Ethereum 2.0

Biden Should Integrate Bitcoin Into US Financial System, Says Niall Ferguson

Bitcoin Is Winning The Monetary Revolution

Cash Is Trash, Dump Gold, Buy Bitcoin!

Bitcoin Price Sets New Record High Above $19,783

You Call That A Record? Bitcoin’s November Gains Are 3x Stock Market’s

Bitcoin Fights Back With Power, Speed and Millions of Users

Exchanges Outdo Auctions For Governments Cashing In Criminal Crypto, Says Exec

Coinbase CEO: Trump Administration May ‘Rush Out’ Burdensome Crypto Wallet Rules

Bitcoin Plunges Along With Other Coins Providing For A Major Black Friday Sale Opportunity

The Most Bullish Bitcoin Arguments For Your Thanksgiving Table

‘Bitcoin Tuesday’ To Become One Of The Largest-Ever Crypto Donation Events

World’s First 24/7 Crypto Call-In Station!!!

Bitcoin Trades Again Near Record, Driven By New Group Of Buyers

Friendliest Of Them All? These Could Be The Best Countries For Crypto

Bitcoin Price Doubles Since The Halving, With Just 3.4M Bitcoin Left For Buyers

First Company-Sponsored Bitcoin Retirement Plans Launched In US

Poker Players Are Enhancing Winnings By Cashing Out In Bitcoin

Crypto-Friendly Brooks Gets Nod To Serve 5-Year Term Leading Bank Regulator

The Bitcoin Comeback: Is Crypto Finally Going Mainstream?

The Dark Future Where Payments Are Politicized And Bitcoin Wins

Mexico’s 3rd Richest Man Reveals BTC Holdings As Bitcoin Breaches $18,000

Ultimate Resource On Mike Novogratz And Galaxy Digital’s Bitcoin News

Bitcoin’s Gunning For A Record And No One’s Talking About It

Simple Steps To Keep Your Crypto Safe

US Company Now Lets Travelers Pay For Passports With Bitcoin

Billionaire Hedge Fund Investor Stanley Druckenmiller Says He Owns Bitcoin In CNBC Interview

China’s UnionPay And Korea’s Danal To Launch Crypto-Supporting Digital Card #GotBitcoin

Bitcoin Is Back Trading Near Three-Year Highs

Bitcoin Transaction Fees Rise To 28-Month High As Hashrate Drops Amid Price Rally

Market Is Proving Bitcoin Is ‘Ultimate Safe Haven’ — Anthony Pompliano

3 Reasons Why Bitcoin Price Suddenly Dropping Below $13,000 Isn’t Bearish

Bitcoin Resurgence Leaves Institutional Acceptance Unanswered

WordPress Content Can Now Be Timestamped On Ethereum

PayPal To Offer Crypto Payments Starting In 2021 (A-Z) (#GotBitcoin?)

As Bitcoin Approaches $13,000 It Breaks Correlation With Equities

Crypto M&A Surges Past 2019 Total As Rest of World Eclipses U.S. (#GotBitcoin?)

How HBCUs Are Prepping Black Students For Blockchain Careers

Why Every US Congressman Just Got Sent Some ‘American’ Bitcoin

CME Sounding Out Crypto Traders To Gauge Market Demand For Ether Futures, Options

Caitlin Long On Bitcoin, Blockchain And Rehypothecation (#GotBitcoin?)

Bitcoin Drops To $10,446.83 As CFTC Charges BitMex With Illegally Operating Derivatives Exchange

BitcoinACKs Lets You Track Bitcoin Development And Pay Coders For Their Work

One Of Hal Finney’s Lost Contributions To Bitcoin Core To Be ‘Resurrected’ (#GotBitcoin?)

Cross-chain Money Markets, Latest Attempt To Bring Liquidity To DeFi

Memes Mean Mad Money. Those Silly Defi Memes, They’re Really Important (#GotBitcoin?)

Bennie Overton’s Story About Our Corrupt U.S. Judicial, Global Financial Monetary System And Bitcoin

Stop Fucking Around With Public Token Airdrops In The United States (#GotBitcoin?)

Mad Money’s Jim Cramer Will Invest 1% Of Net Worth In Bitcoin Says, “Gold Is Dangerous”

State-by-state Licensing For Crypto And Payments Firms In The US Just Got Much Easier (#GotBitcoin?)

Bitcoin (BTC) Ranks As World’s 6th Largest Currency

Pomp Claims He Convinced Jim Cramer To Buy Bitcoin

Traditional Investors View Bitcoin As If It Were A Technology Stock

Mastercard Releases Platform Enabling Central Banks To Test Digital Currencies (#GotBitcoin?)

Being Black On Wall Street. Top Black Executives Speak Out About Racism (#GotBitcoin?)

Tesla And Bitcoin Are The Most Popular Assets On TradingView (#GotBitcoin?)

From COVID Generation To Crypto Generation (#GotBitcoin?)

Right-Winger Tucker Carlson Causes Grayscale Investments To Pull Bitcoin Ads

Bitcoin Has Lost Its Way: Here’s How To Return To Crypto’s Subversive Roots

Cross Chain Is Here: NEO, ONT, Cosmos And NEAR Launch Interoperability Protocols (#GotBitcoin?)

Crypto Trading Products Enter The Mainstream With A Number Of Inherent Advantages (#GotBitcoin?)

Crypto Goes Mainstream With TV, Newspaper Ads (#GotBitcoin?)

A Guarded Generation: How Millennials View Money And Investing (#GotBitcoin?)

Blockchain-Backed Social Media Brings More Choice For Users

California Moves Forward With Digital Asset Bill (#GotBitcoin?)

Walmart Adds Crypto Cashback Through Shopping Loyalty Platform StormX (#GotBitcoin?)

Congressman Tom Emmer To Lead First-Ever Crypto Town Hall (#GotBitcoin?)

Why It’s Time To Pay Attention To Mexico’s Booming Crypto Market (#GotBitcoin?)

The Assets That Matter Most In Crypto (#GotBitcoin?)

Ultimate Resource On Non-Fungible Tokens

Bitcoin Community Highlights Double-Standard Applied Deutsche Bank Epstein Scandal

Blockchain Makes Strides In Diversity. However, Traditional Tech Industry Not-So-Much (#GotBitcoin?)

An Israeli Blockchain Startup Claims It’s Invented An ‘Undo’ Button For BTC Transactions

After Years of Resistance, BitPay Adopts SegWit For Cheaper Bitcoin Transactions

US Appeals Court Allows Warrantless Search of Blockchain, Exchange Data

Central Bank Rate Cuts Mean ‘World Has Gone Zimbabwe’

This Researcher Says Bitcoin’s Elliptic Curve Could Have A Secret Backdoor

China Discovers 4% Of Its Reserves Or 83 Tons Of Its Gold Bars Are Fake (#GotBitcoin?)

Former Legg Mason Star Bill Miller And Bloomberg Are Optimistic About Bitcoin’s Future

Yield Chasers Are Yield Farming In Crypto-Currencies (#GotBitcoin?)

Australia Post Office Now Lets Customers Buy Bitcoin At Over 3,500 Outlets

Anomaly On Bitcoin Sidechain Results In Brief Security Lapse

SEC And DOJ Charge Lobbying Kingpin Jack Abramoff And Associate With Money Laundering

Veteran Commodities Trader Chris Hehmeyer Goes All In On Crypto (#GotBitcoin?)

Activists Document Police Misconduct Using Decentralized Protocol (#GotBitcoin?)

Supposedly, PayPal, Venmo To Roll Out Crypto Buying And Selling (#GotBitcoin?)

Industry Leaders Launch PayID, The Universal ID For Payments (#GotBitcoin?)

Crypto Quant Fund Debuts With $23M In Assets, $2.3B In Trades (#GotBitcoin?)

The Queens Politician Who Wants To Give New Yorkers Their Own Crypto

Why Does The SEC Want To Run Bitcoin And Ethereum Nodes?

US Drug Agency Failed To Properly Supervise Agent Who Stole $700,000 In Bitcoin In 2015

Layer 2 Will Make Bitcoin As Easy To Use As The Dollar, Says Kraken CEO

Bootstrapping Mobile Mesh Networks With Bitcoin Lightning

Nevermind Coinbase — Big Brother Is Already Watching Your Coins (#GotBitcoin?)

BitPay’s Prepaid Mastercard Launches In US to Make Crypto Accessible (#GotBitcoin?)

Germany’s Deutsche Borse Exchange To List New Bitcoin Exchange-Traded Product

‘Bitcoin Billionaires’ Movie To Tell Winklevoss Bros’ Crypto Story

US Pentagon Created A War Game To Fight The Establishment With BTC (#GotBitcoin?)

JPMorgan Provides Banking Services To Crypto Exchanges Coinbase And Gemini (#GotBitcoin?)

Bitcoin Advocates Cry Foul As US Fed Buying ETFs For The First Time

Final Block Mined Before Halving Contained Reminder of BTC’s Origins (#GotBitcoin?)

Meet Brian Klein, Crypto’s Own ‘High-Stakes’ Trial Attorney (#GotBitcoin?)

3 Reasons For The Bitcoin Price ‘Halving Dump’ From $10K To $8.1K

Bitcoin Outlives And Outlasts Naysayers And First Website That Declared It Dead Back In 2010

Hedge Fund Pioneer Turns Bullish On Bitcoin Amid ‘Unprecedented’ Monetary Inflation

Antonopoulos: Chainalysis Is Helping World’s Worst Dictators & Regimes (#GotBitcoin?)

Survey Shows Many BTC Holders Use Hardware Wallet, Have Backup Keys (#GotBitcoin?)

Iran Ditches The Rial Amid Hyperinflation As LocalBitcoins Seem To Trade Near $35K

Buffett ‘Killed His Reputation’ by Being Stupid About BTC, Says Max Keiser (#GotBitcoin?)

Blockfolio Quietly Patches Years-Old Security Hole That Exposed Source Code (#GotBitcoin?)

Bitcoin Won As Store of Value In Coronavirus Crisis — Hedge Fund CEO

Decentralized VPN Gaining Steam At 100,000 Users Worldwide (#GotBitcoin?)

Crypto Exchange Offers Credit Lines so Institutions Can Trade Now, Pay Later (#GotBitcoin?)

Zoom Develops A Cryptocurrency Paywall To Reward Creators Video Conferencing Sessions (#GotBitcoin?)

Bitcoin Startup Purse.io And Major Bitcoin Cash Partner To Shut Down After 6-Year Run

Open Interest In CME Bitcoin Futures Rises 70% As Institutions Return To Market

Square’s Users Can Route Stimulus Payments To BTC-Friendly Cash App

$1.1 Billion BTC Transaction For Only $0.68 Demonstrates Bitcoin’s Advantage Over Banks

Bitcoin Could Become Like ‘Prison Cigarettes’ Amid Deepening Financial Crisis

Bitcoin Holds Value As US Debt Reaches An Unfathomable $24 Trillion

How To Get Money (Crypto-currency) To People In An Emergency, Fast

Bitcoin Miner Manufacturers Mark Down Prices Ahead of Halving

Privacy-Oriented Browsers Gain Traction (#GotBitcoin?)

‘Breakthrough’ As Lightning Uses Web’s Forgotten Payment Code (#GotBitcoin?)

Bitcoin Starts Quarter With Price Down Just 10% YTD vs U.S. Stock’s Worst Quarter Since 2008

Bitcoin Enthusiasts, Liberal Lawmakers Cheer A Fed-Backed Digital Dollar

Crypto-Friendly Bank Revolut Launches In The US (#GotBitcoin?)

The CFTC Just Defined What ‘Actual Delivery’ of Crypto Should Look Like (#GotBitcoin?)

Crypto CEO Compares US Dollar To OneCoin Scam As Fed Keeps Printing (#GotBitcoin?)

Stuck In Quarantine? Become A Blockchain Expert With These Online Courses (#GotBitcoin?)

Bitcoin, Not Governments Will Save the World After Crisis, Tim Draper Says

Crypto Analyst Accused of Photoshopping Trade Screenshots (#GotBitcoin?)

QE4 Begins: Fed Cuts Rates, Buys $700B In Bonds; Bitcoin Rallies 7.7%

Mike Novogratz And Andreas Antonopoulos On The Bitcoin Crash

Amid Market Downturn, Number of People Owning 1 BTC Hits New Record (#GotBitcoin?)

Fatburger And Others Feed $30 Million Into Ethereum For New Bond Offering (#GotBitcoin?)

Pornhub Will Integrate PumaPay Recurring Subscription Crypto Payments (#GotBitcoin?)

Intel SGX Vulnerability Discovered, Cryptocurrency Keys Threatened

Bitcoin’s Plunge Due To Manipulation, Traditional Markets Falling or PlusToken Dumping?

Countries That First Outlawed Crypto But Then Embraced It (#GotBitcoin?)

Bitcoin Maintains Gains As Global Equities Slide, US Yield Hits Record Lows

India Supreme Court Lifts RBI Ban On Banks Servicing Crypto Firms (#GotBitcoin?)

Analyst Claims 98% of Mining Rigs Fail to Verify Transactions (#GotBitcoin?)

Blockchain Storage Offers Security, Data Transparency And Immutability. Get Over It!

Black Americans & Crypto (#GotBitcoin?)

Coinbase Wallet Now Allows Users To Send Crypto Through Usernames (#GotBitcoin)

New ‘Simpsons’ Episode Features Jim Parsons Giving A Crypto Explainer For The Masses (#GotBitcoin?)

Crypto-currency Founder Met With Warren Buffett For Charity Lunch (#GotBitcoin?)

Bitcoin’s Potential To Benefit The African And African-American Community

Coinbase Becomes Direct Visa Card Issuer With Principal Membership

Bitcoin Achieves Major Milestone With Half A Billion Transactions Confirmed

Jill Carlson, Meltem Demirors Back $3.3M Round For Non-Custodial Settlement Protocol Arwen

Crypto Companies Adopt Features Similar To Banks (Only Better) To Drive Growth (#GotBitcoin?)

Top Graphics Cards That Will Turn A Crypto Mining Profit (#GotBitcoin?)

Bitcoin Usage Among Merchants Is Up, According To Data From Coinbase And BitPay

Top 10 Books Recommended by Crypto (#Bitcoin) Thought Leaders

Twitter Adds Bitcoin Emoji, Jack Dorsey Suggests Unicode Does The Same

Bitcoiners Are Now Into Fasting. Read This Article To Find Out Why

You Can Now Donate Bitcoin Or Fiat To Show Your Support For All Of Our Valuable Content

2019’s Top 10 Institutional Actors In Crypto (#GotBitcoin?)

What Does Twitter’s New Decentralized Initiative Mean? (#GotBitcoin?)

Crypto-Friendly Silvergate Bank Goes Public On New York Stock Exchange (#GotBitcoin?)

Bitcoin’s Best Q1 Since 2013 To ‘Escalate’ If $9.5K Is Broken

Billionaire Investor Tim Draper: If You’re a Millennial, Buy Bitcoin

What Are Lightning Wallets Doing To Help Onboard New Users? (#GotBitcoin?)

If You Missed Out On Investing In Amazon, Bitcoin Might Be A Second Chance For You (#GotBitcoin?)

2020 And Beyond: Bitcoin’s Potential Protocol (Privacy And Scalability) Upgrades (#GotBitcoin?)

US Deficit Will Be At Least 6 Times Bitcoin Market Cap — Every Year (#GotBitcoin?)

Central Banks Warm To Issuing Digital Currencies (#GotBitcoin?)

Meet The Crypto Angel Investor Running For Congress In Nevada (#GotBitcoin?)

Introducing BTCPay Vault – Use Any Hardware Wallet With BTCPay And Its Full Node (#GotBitcoin?)

How Not To Lose Your Coins In 2020: Alternative Recovery Methods (#GotBitcoin?)

H.R.5635 – Virtual Currency Tax Fairness Act of 2020 ($200.00 Limit) 116th Congress (2019-2020)

Adam Back On Satoshi Emails, Privacy Concerns And Bitcoin’s Early Days

The Prospect of Using Bitcoin To Build A New International Monetary System Is Getting Real

How To Raise Funds For Australia Wildfire Relief Efforts (Using Bitcoin And/Or Fiat)

Former Regulator Known As ‘Crypto Dad’ To Launch Digital-Dollar Think Tank (#GotBitcoin?)

Currency ‘Cold War’ Takes Center Stage At Pre-Davos Crypto Confab (#GotBitcoin?)

A Blockchain-Secured Home Security Camera Won Innovation Awards At CES 2020 Las Vegas

Bitcoin’s Had A Sensational 11 Years (#GotBitcoin?)

Sergey Nazarov And The Creation Of A Decentralized Network Of Oracles

Google Suspends MetaMask From Its Play App Store, Citing “Deceptive Services”

Christmas Shopping: Where To Buy With Crypto This Festive Season

At 8,990,000% Gains, Bitcoin Dwarfs All Other Investments This Decade

Coinbase CEO Armstrong Wins Patent For Tech Allowing Users To Email Bitcoin

Bitcoin Has Got Society To Think About The Nature Of Money

How DeFi Goes Mainstream In 2020: Focus On Usability (#GotBitcoin?)

Dissidents And Activists Have A Lot To Gain From Bitcoin, If Only They Knew It (#GotBitcoin?)

At A Refugee Camp In Iraq, A 16-Year-Old Syrian Is Teaching Crypto Basics

Bitclub Scheme Busted In The US, Promising High Returns From Mining

Bitcoin Advertised On French National TV

Germany: New Proposed Law Would Legalize Banks Holding Bitcoin

How To Earn And Spend Bitcoin On Black Friday 2019

The Ultimate List of Bitcoin Developments And Accomplishments

Charities Put A Bitcoin Twist On Giving Tuesday

Family Offices Finally Accept The Benefits of Investing In Bitcoin

An Army Of Bitcoin Devs Is Battle-Testing Upgrades To Privacy And Scaling

Bitcoin ‘Carry Trade’ Can Net Annual Gains With Little Risk, Says PlanB

Max Keiser: Bitcoin’s ‘Self-Settlement’ Is A Revolution Against Dollar

Blockchain Can And Will Replace The IRS

China Seizes The Blockchain Opportunity. How Should The US Respond? (#GotBitcoin?)

Jack Dorsey: You Can Buy A Fraction Of Berkshire Stock Or ‘Stack Sats’

Bitcoin Price Skyrockets $500 In Minutes As Bakkt BTC Contracts Hit Highs

Bitcoin’s Irreversibility Challenges International Private Law: Legal Scholar

Bitcoin Has Already Reached 40% Of Average Fiat Currency Lifespan

Yes, Even Bitcoin HODLers Can Lose Money In The Long-Term: Here’s How (#GotBitcoin?)

Unicef To Accept Donations In Bitcoin (#GotBitcoin?)

Former Prosecutor Asked To “Shut Down Bitcoin” And Is Now Face Of Crypto VC Investing (#GotBitcoin?)

Switzerland’s ‘Crypto Valley’ Is Bringing Blockchain To Zurich

Next Bitcoin Halving May Not Lead To Bull Market, Says Bitmain CEO

Bitcoin Developer Amir Taaki, “We Can Crash National Economies” (#GotBitcoin?)

Veteran Crypto And Stocks Trader Shares 6 Ways To Invest And Get Rich

Is Chainlink Blazing A Trail Independent Of Bitcoin?

Nearly $10 Billion In BTC Is Held In Wallets Of 8 Crypto Exchanges (#GotBitcoin?)

SEC Enters Settlement Talks With Alleged Fraudulent Firm Veritaseum (#GotBitcoin?)

Blockstream’s Samson Mow: Bitcoin’s Block Size Already ‘Too Big’

Attorneys Seek Bank Of Ireland Execs’ Testimony Against OneCoin Scammer (#GotBitcoin?)

OpenLibra Plans To Launch Permissionless Fork Of Facebook’s Stablecoin (#GotBitcoin?)

Tiny $217 Options Trade On Bitcoin Blockchain Could Be Wall Street’s Death Knell (#GotBitcoin?)

Class Action Accuses Tether And Bitfinex Of Market Manipulation (#GotBitcoin?)

Sharia Goldbugs: How ISIS Created A Currency For World Domination (#GotBitcoin?)

Bitcoin Eyes Demand As Hong Kong Protestors Announce Bank Run (#GotBitcoin?)

How To Securely Transfer Crypto To Your Heirs

‘Gold-Backed’ Crypto Token Promoter Karatbars Investigated By Florida Regulators (#GotBitcoin?)

Crypto News From The Spanish-Speaking World (#GotBitcoin?)

Financial Services Giant Morningstar To Offer Ratings For Crypto Assets (#GotBitcoin?)

The Original Sins Of Cryptocurrencies (#GotBitcoin?)

Bitcoin Is The Fraud? JPMorgan Metals Desk Fixed Gold Prices For Years (#GotBitcoin?)

Israeli Startup That Allows Offline Crypto Transactions Secures $4M (#GotBitcoin?)

[PSA] Non-genuine Trezor One Devices Spotted (#GotBitcoin?)

Bitcoin Stronger Than Ever But No One Seems To Care: Google Trends (#GotBitcoin?)

First-Ever SEC-Qualified Token Offering In US Raises $23 Million (#GotBitcoin?)

You Can Now Prove A Whole Blockchain With One Math Problem – Really

Crypto Mining Supply Fails To Meet Market Demand In Q2: TokenInsight

$2 Billion Lost In Mt. Gox Bitcoin Hack Can Be Recovered, Lawyer Claims (#GotBitcoin?)

Fed Chair Says Agency Monitoring Crypto But Not Developing Its Own (#GotBitcoin?)

Wesley Snipes Is Launching A Tokenized $25 Million Movie Fund (#GotBitcoin?)

Mystery 94K BTC Transaction Becomes Richest Non-Exchange Address (#GotBitcoin?)

A Crypto Fix For A Broken International Monetary System (#GotBitcoin?)

Four Out Of Five Top Bitcoin QR Code Generators Are Scams: Report (#GotBitcoin?)

Waves Platform And The Abyss To Jointly Launch Blockchain-Based Games Marketplace (#GotBitcoin?)

Bitmain Ramps Up Power And Efficiency With New Bitcoin Mining Machine (#GotBitcoin?)

Ledger Live Now Supports Over 1,250 Ethereum-Based ERC-20 Tokens (#GotBitcoin?)

Miss Finland: Bitcoin’s Risk Keeps Most Women Away From Cryptocurrency (#GotBitcoin?)

Artist Akon Loves BTC And Says, “It’s Controlled By The People” (#GotBitcoin?)

Co-Founder Of LinkedIn Presents Crypto Rap Video: Hamilton Vs. Satoshi (#GotBitcoin?)

Crypto Insurance Market To Grow, Lloyd’s Of London And Aon To Lead (#GotBitcoin?)

No ‘AltSeason’ Until Bitcoin Breaks $20K, Says Hedge Fund Manager (#GotBitcoin?)

NSA Working To Develop Quantum-Resistant Cryptocurrency: Report (#GotBitcoin?)

Custody Provider Legacy Trust Launches Crypto Pension Plan (#GotBitcoin?)

VanEck, SolidX To Offer Limited Bitcoin ETF For Institutions Via Exemption (#GotBitcoin?)

Russell Okung: From NFL Superstar To Bitcoin Educator In 2 Years (#GotBitcoin?)

Bitcoin Miners Made $14 Billion To Date Securing The Network (#GotBitcoin?)

Why Does Amazon Want To Hire Blockchain Experts For Its Ads Division?

Argentina’s Economy Is In A Technical Default (#GotBitcoin?)

Blockchain-Based Fractional Ownership Used To Sell High-End Art (#GotBitcoin?)

Portugal Tax Authority: Bitcoin Trading And Payments Are Tax-Free (#GotBitcoin?)

Bitcoin ‘Failed Safe Haven Test’ After 7% Drop, Peter Schiff Gloats (#GotBitcoin?)

Bitcoin Dev Reveals Multisig UI Teaser For Hardware Wallets, Full Nodes (#GotBitcoin?)

Bitcoin Price: $10K Holds For Now As 50% Of CME Futures Set To Expire (#GotBitcoin?)

Bitcoin Realized Market Cap Hits $100 Billion For The First Time (#GotBitcoin?)

Stablecoins Begin To Look Beyond The Dollar (#GotBitcoin?)

Bank Of England Governor: Libra-Like Currency Could Replace US Dollar (#GotBitcoin?)

Binance Reveals ‘Venus’ — Its Own Project To Rival Facebook’s Libra (#GotBitcoin?)

The Real Benefits Of Blockchain Are Here. They’re Being Ignored (#GotBitcoin?)

CommBank Develops Blockchain Market To Boost Biodiversity (#GotBitcoin?)

SEC Approves Blockchain Tech Startup Securitize To Record Stock Transfers (#GotBitcoin?)

SegWit Creator Introduces New Language For Bitcoin Smart Contracts (#GotBitcoin?)

You Can Now Earn Bitcoin Rewards For Postmates Purchases (#GotBitcoin?)

Bitcoin Price ‘Will Struggle’ In Big Financial Crisis, Says Investor (#GotBitcoin?)

Fidelity Charitable Received Over $100M In Crypto Donations Since 2015 (#GotBitcoin?)

Would Blockchain Better Protect User Data Than FaceApp? Experts Answer (#GotBitcoin?)

Just The Existence Of Bitcoin Impacts Monetary Policy (#GotBitcoin?)

What Are The Biggest Alleged Crypto Heists And How Much Was Stolen? (#GotBitcoin?)

IRS To Cryptocurrency Owners: Come Clean, Or Else!

Coinbase Accidentally Saves Unencrypted Passwords Of 3,420 Customers (#GotBitcoin?)

Bitcoin Is A ‘Chaos Hedge, Or Schmuck Insurance’ (#GotBitcoin?)

Bakkt Announces September 23 Launch Of Futures And Custody

Coinbase CEO: Institutions Depositing $200-400M Into Crypto Per Week (#GotBitcoin?)

Researchers Find Monero Mining Malware That Hides From Task Manager (#GotBitcoin?)

Crypto Dusting Attack Affects Nearly 300,000 Addresses (#GotBitcoin?)

A Case For Bitcoin As Recession Hedge In A Diversified Investment Portfolio (#GotBitcoin?)

SEC Guidance Gives Ammo To Lawsuit Claiming XRP Is Unregistered Security (#GotBitcoin?)

15 Countries To Develop Crypto Transaction Tracking System: Report (#GotBitcoin?)

US Department Of Commerce Offering 6-Figure Salary To Crypto Expert (#GotBitcoin?)

Mastercard Is Building A Team To Develop Crypto, Wallet Projects (#GotBitcoin?)

Canadian Bitcoin Educator Scams The Scammer And Donates Proceeds (#GotBitcoin?)

Amazon Wants To Build A Blockchain For Ads, New Job Listing Shows (#GotBitcoin?)

Shield Bitcoin Wallets From Theft Via Time Delay (#GotBitcoin?)

Blockstream Launches Bitcoin Mining Farm With Fidelity As Early Customer (#GotBitcoin?)

Commerzbank Tests Blockchain Machine To Machine Payments With Daimler (#GotBitcoin?)

Man Takes Bitcoin Miner Seller To Tribunal Over Electricity Bill And Wins (#GotBitcoin?)

Bitcoin’s Computing Power Sets Record As Over 100K New Miners Go Online (#GotBitcoin?)

Walmart Coin And Libra Perform Major Public Relations For Bitcoin (#GotBitcoin?)

Judge Says Buying Bitcoin Via Credit Card Not Necessarily A Cash Advance (#GotBitcoin?)

Poll: If You’re A Stockowner Or Crypto-Currency Holder. What Will You Do When The Recession Comes?

1 In 5 Crypto Holders Are Women, New Report Reveals (#GotBitcoin?)

Beating Bakkt, LedgerX Is First To Launch ‘Physical’ Bitcoin Futures In US (#GotBitcoin?)

Facebook Warns Investors That Libra Stablecoin May Never Launch (#GotBitcoin?)

Government Money Printing Is ‘Rocket Fuel’ For Bitcoin (#GotBitcoin?)

Bitcoin-Friendly Square Cash App Stock Price Up 56% In 2019 (#GotBitcoin?)

Safeway Shoppers Can Now Get Bitcoin Back As Change At 894 US Stores (#GotBitcoin?)

TD Ameritrade CEO: There’s ‘Heightened Interest Again’ With Bitcoin (#GotBitcoin?)

Venezuela Sets New Bitcoin Volume Record Thanks To 10,000,000% Inflation (#GotBitcoin?)

Newegg Adds Bitcoin Payment Option To 73 More Countries (#GotBitcoin?)

China’s Schizophrenic Relationship With Bitcoin (#GotBitcoin?)

More Companies Build Products Around Crypto Hardware Wallets (#GotBitcoin?)

Bakkt Is Scheduled To Start Testing Its Bitcoin Futures Contracts Today (#GotBitcoin?)

Bitcoin Network Now 8 Times More Powerful Than It Was At $20K Price (#GotBitcoin?)

Crypto Exchange BitMEX Under Investigation By CFTC: Bloomberg (#GotBitcoin?)

Bitcoin An ‘Unstoppable Force,’ Says US Congressman At Crypto Hearing (#GotBitcoin?)

Bitcoin Network Is Moving $3 Billion Daily, Up 210% Since April (#GotBitcoin?)

Cryptocurrency Startups Get Partial Green Light From Washington

Fundstrat’s Tom Lee: Bitcoin Pullback Is Healthy, Fewer Searches Are Good (#GotBitcoin?)

Bitcoin Lightning Nodes Are Snatching Funds From Bad Actors (#GotBitcoin?)

The Provident Bank Now Offers Deposit Services For Crypto-Related Entities (#GotBitcoin?)

Bitcoin Could Help Stop News Censorship From Space (#GotBitcoin?)

US Sanctions On Iran Crypto Mining — Inevitable Or Impossible? (#GotBitcoin?)

US Lawmaker Reintroduces ‘Safe Harbor’ Crypto Tax Bill In Congress (#GotBitcoin?)

EU Central Bank Won’t Add Bitcoin To Reserves — Says It’s Not A Currency (#GotBitcoin?)

The Miami Dolphins Now Accept Bitcoin And Litecoin Crypto-Currency Payments (#GotBitcoin?)

Trump Bashes Bitcoin And Alt-Right Is Mad As Hell (#GotBitcoin?)

Goldman Sachs Ramps Up Development Of New Secret Crypto Project (#GotBitcoin?)

Blockchain And AI Bond, Explained (#GotBitcoin?)

Grayscale Bitcoin Trust Outperformed Indexes In First Half Of 2019 (#GotBitcoin?)

XRP Is The Worst Performing Major Crypto Of 2019 (#GotBitcoin?)

Bitcoin Back Near $12K As BTC Shorters Lose $44 Million In One Morning (#GotBitcoin?)

As Deutsche Bank Axes 18K Jobs, Bitcoin Offers A ‘Plan ฿’: VanEck Exec (#GotBitcoin?)

Argentina Drives Global LocalBitcoins Volume To Highest Since November (#GotBitcoin?)

‘I Would Buy’ Bitcoin If Growth Continues — Investment Legend Mobius (#GotBitcoin?)

Lawmakers Push For New Bitcoin Rules (#GotBitcoin?)

Facebook’s Libra Is Bad For African Americans (#GotBitcoin?)

Crypto Firm Charity Announces Alliance To Support Feminine Health (#GotBitcoin?)

Canadian Startup Wants To Upgrade Millions Of ATMs To Sell Bitcoin (#GotBitcoin?)

Trump Says US ‘Should Match’ China’s Money Printing Game (#GotBitcoin?)

Casa Launches Lightning Node Mobile App For Bitcoin Newbies (#GotBitcoin?)

Bitcoin Rally Fuels Market In Crypto Derivatives (#GotBitcoin?)

World’s First Zero-Fiat ‘Bitcoin Bond’ Now Available On Bloomberg Terminal (#GotBitcoin?)

Buying Bitcoin Has Been Profitable 98.2% Of The Days Since Creation (#GotBitcoin?)

Another Crypto Exchange Receives License For Crypto Futures

From ‘Ponzi’ To ‘We’re Working On It’ — BIS Chief Reverses Stance On Crypto (#GotBitcoin?)

These Are The Cities Googling ‘Bitcoin’ As Interest Hits 17-Month High (#GotBitcoin?)

Venezuelan Explains How Bitcoin Saves His Family (#GotBitcoin?)

Quantum Computing Vs. Blockchain: Impact On Cryptography

This Fund Is Riding Bitcoin To Top (#GotBitcoin?)

Bitcoin’s Surge Leaves Smaller Digital Currencies In The Dust (#GotBitcoin?)

Bitcoin Exchange Hits $1 Trillion In Trading Volume (#GotBitcoin?)

Bitcoin Breaks $200 Billion Market Cap For The First Time In 17 Months (#GotBitcoin?)

You Can Now Make State Tax Payments In Bitcoin (#GotBitcoin?)

Religious Organizations Make Ideal Places To Mine Bitcoin (#GotBitcoin?)

Goldman Sachs And JP Morgan Chase Finally Concede To Crypto-Currencies (#GotBitcoin?)

Bitcoin Heading For Fifth Month Of Gains Despite Price Correction (#GotBitcoin?)

Breez Reveals Lightning-Powered Bitcoin Payments App For IPhone (#GotBitcoin?)

Big Four Auditing Firm PwC Releases Cryptocurrency Auditing Software (#GotBitcoin?)

Amazon-Owned Twitch Quietly Brings Back Bitcoin Payments (#GotBitcoin?)

JPMorgan Will Pilot ‘JPM Coin’ Stablecoin By End Of 2019: Report (#GotBitcoin?)

Is There A Big Short In Bitcoin? (#GotBitcoin?)

Coinbase Hit With Outage As Bitcoin Price Drops $1.8K In 15 Minutes

Samourai Wallet Releases Privacy-Enhancing CoinJoin Feature (#GotBitcoin?)

There Are Now More Than 5,000 Bitcoin ATMs Around The World (#GotBitcoin?)

You Can Now Get Bitcoin Rewards When Booking At Hotels.Com (#GotBitcoin?)

North America’s Largest Solar Bitcoin Mining Farm Coming To California (#GotBitcoin?)

Bitcoin On Track For Best Second Quarter Price Gain On Record (#GotBitcoin?)

Bitcoin Hash Rate Climbs To New Record High Boosting Network Security (#GotBitcoin?)

Bitcoin Exceeds 1 Million Active Addresses While Coinbase Custodies $1.3B In Assets

Why Bitcoin’s Price Suddenly Surged Back $5K (#GotBitcoin?)

Bitcoin’s Lightning Comes To Apple Smartwatches With New App (#GotBitcoin?)

E-Trade To Offer Crypto Trading (#GotBitcoin)

Bitfinex Used Tether Reserves To Mask Missing $850 Million, Probe Finds (#GotBitcoin?)

21-Year-Old Jailed For 10 Years After Stealing $7.5M In Crypto By Hacking Cell Phones (#GotBitcoin?)

You Can Now Shop With Bitcoin On Amazon Using Lightning (#GotBitcoin?)

Afghanistan, Tunisia To Issue Sovereign Bonds In Bitcoin, Bright Future Ahead (#GotBitcoin?)

Crypto Faithful Say Blockchain Can Remake Securities Market Machinery (#GotBitcoin?)

Disney In Talks To Acquire The Owner Of Crypto Exchanges Bitstamp And Korbit (#GotBitcoin?)

Crypto Exchange Gemini Rolls Out Native Wallet Support For SegWit Bitcoin Addresses (#GotBitcoin?)

Binance Delists Bitcoin SV, CEO Calls Craig Wright A ‘Fraud’ (#GotBitcoin?)

Bitcoin Outperforms Nasdaq 100, S&P 500, Grows Whopping 37% In 2019 (#GotBitcoin?)

Bitcoin Passes A Milestone 400 Million Transactions (#GotBitcoin?)

Future Returns: Why Investors May Want To Consider Bitcoin Now (#GotBitcoin?)

Next Bitcoin Core Release To Finally Connect Hardware Wallets To Full Nodes (#GotBitcoin?)

Major Crypto-Currency Exchanges Use Lloyd’s Of London, A Registered Insurance Broker (#GotBitcoin?)

How Bitcoin Can Prevent Fraud And Chargebacks (#GotBitcoin?)

Zebpay Becomes First Exchange To Add Lightning Payments For All Users (#GotBitcoin?)

Coinbase’s New Customer Incentive: Interest Payments, With A Crypto Twist (#GotBitcoin?)

The Best Bitcoin Debit (Cashback) Cards Of 2019 (#GotBitcoin?)

Real Estate Brokerages Now Accepting Bitcoin (#GotBitcoin?)

Ernst & Young Introduces Tax Tool For Reporting Cryptocurrencies (#GotBitcoin?)

How Will Bitcoin Behave During A Recession? (#GotBitcoin?)

Investors Run Out of Options As Bitcoin, Stocks, Bonds, Oil Cave To Recession Fears (#GotBitcoin?)

Wonderful site. Lots of useful info here. I’m sending it to several pals and also sharing it on Delicious. And obviously, thanks for your hard work!

Thanks for the compliments.

We really appreciate that.

Monty