Operating The Brain By Remote Control

Dr. William J. Tyler is an Assistant Professor in the School of Life Sciences at Arizona State University, a co-founder and the CSO of SynSonix, Inc., and a member of the 2010 DARPA Young Faculty Award class.

Recent advances in neurotechnology have shown that brain stimulation is capable of treating neurological diseases and brain injury, as well as serving as a platform around which brain-computer interfaces can be built for various purposes.

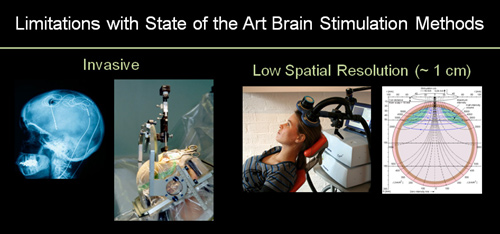

Several limitations, however, still pose significant challenges to implementing traditional brain stimulation methods for treating diseases and controlling information processing in brain circuits.

For example, deep-brain stimulating (DBS) electrodes used to treat movement disorders such as Parkinson’s disease require neurosurgery in order to implant electrodes and batteries into patients.

Transcranial magnetic stimulation (TMS), used to treat drug-resistant depression and other disorders, does not require surgery, but it has a low spatial resolution of approximately one centimeter and cannot stimulate deep brain circuits where many diseased circuits reside.

These illustrations show the surgical invasiveness of deep-brain stimulating electrodes (left) and depict the low spatial resolutions conferred by transcranial magnetic stimulation (right). (Image: Tyler Lab)

To overcome the above limitations, my laboratory has engineered a novel technology which implements transcranial pulsed ultrasound to remotely and directly stimulate brain circuits without requiring surgery. Further, we have shown this ultrasonic neuromodulation approach confers a spatial resolution approximately five times greater than TMS and can exert its effects upon subcortical brain circuits deep within the brain.

A portion of our initial work has been supported by the U.S. Army Research, Development and Engineering Command (RDECOM) Army Research Laboratory (ARL) where we have been working to develop methods for encoding sensory data onto the cortex using pulsed ultrasound.

Through a recent grant from the Defense Advanced Research Projects Agency (DARPA) Young Faculty Award Program, our work will enter the next phases of research and development, aimed at engineering future applications of this neurotechnology for our country’s warfighters. Here, we will continue exploring the influence of ultrasound on brain function and begin using transducer phased arrays to examine the influence of focused ultrasound on intact brain circuits. We will also be investigating capacitive micromachined ultrasonic transducers (CMUTs) for use in brain stimulation. Finally, to improve upon spatial resolution, we will examine the use of acoustic metamaterials and hyperlenses to study how subdiffraction-limited ultrasound influences brain wave activity patterns.

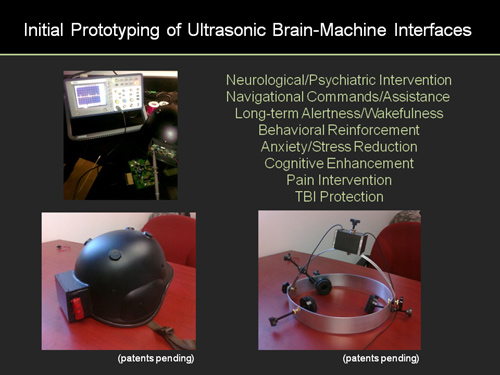

How can this technology be used to provide our nation’s warfighters with strategic advantages? We have developed working and conceptual prototypes in which ballistic helmets can be fitted with ultrasound transducers and microcontroller devices to illustrate potential applications as shown below. We look forward to developing a close working relationship with DARPA and with other Department of Defense and U.S. intelligence community organizations to bring some of these applications to fruition over the coming years, depending on the most pressing needs of our country’s defense industries.

Brain-To-Brain Interfaces Have Arrived, And They Are Absolutely Mindblowing

We already have brain-computer interface systems that allow people to control cursors on a screen using the power of thought. But what about sharing thoughts between two minds? A group of neuroscientists at Harvard have found a way to do it – with a human and a rat.

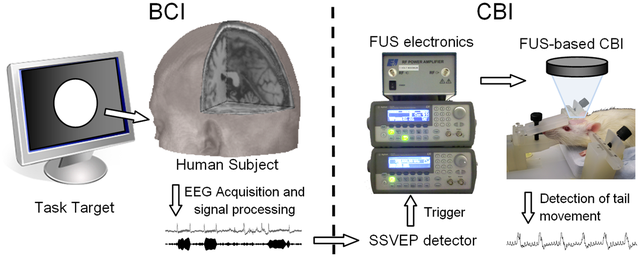

Neuroscientist Seung-Schik Yoo and colleagues wanted to show that a computer could actually send information from one brain to another. And they wanted to do it non-invasively, without sticking electrodes into anybody’s brain. Plus, they wanted to go beyond a previous experiment where one rat sent brain signals to another rat. So they developed a device that would read signals from a human brain, and feed those signals to a rat’s brain. In the end, their human subjects were able to make a rat move its tail just by thinking about it.

Here’s How They Did It:

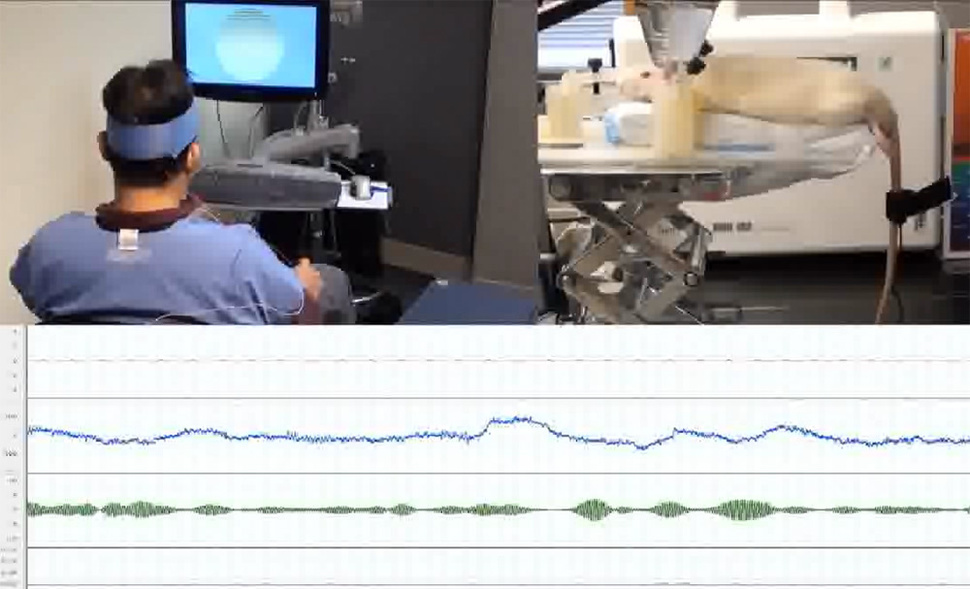

First, they put an EEG device on a human. EEG measures the brain’s electrical signals through the skull. To boost that signal strength, they had the humans look at a computer monitor that was flickering at a very specific frequency. Every time the humans looked at the flickering monitor, that frequency showed up in the EEG recording. So when the humans wanted to signal “move your tail” to the rat, they would look at the monitor and their brains would send the signal.

To control the rat, Yoo and his colleagues used focused ultrasound (FUS), which can harmlessly beam an ultrasound signal into a specific spot in the brain, exciting the neurons around it. So the rat, who was under anesthesia at the time, had its head under a FUS beam, which excited its motor cortex and caused it to twitch its tail while it slept.

The setup worked nicely. In a paper published earlier this year in PLoS One, the researchers report that the humans looked at the flickering monitor, the EEG picked up the signal, and then a computer translated it into a command sent to the rat’s brain via FUS.
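The "EEG picks up the signal, computer translates it into a command" step can be sketched roughly as follows. This is only an illustration under stated assumptions, not the researchers' actual processing: the 15 Hz flicker frequency, the 250 Hz sampling rate, the threshold, and the synthetic signal are all hypothetical values chosen for the example. The idea is simply to check whether the recording contains unusually strong power at the flicker frequency compared with nearby frequencies.

```python
import math
import random


def band_power(samples, sample_rate_hz, freq_hz):
    """Power of `samples` at a single frequency (one-bin discrete Fourier sum)."""
    n = len(samples)
    step = 2 * math.pi * freq_hz / sample_rate_hz
    re = sum(x * math.cos(step * i) for i, x in enumerate(samples))
    im = sum(x * math.sin(step * i) for i, x in enumerate(samples))
    return (re * re + im * im) / (n * n)


def should_trigger(samples, sample_rate_hz, flicker_hz, ratio_threshold=20.0):
    """True when power at the flicker frequency stands out from its neighbours.

    The neighbour offsets and the threshold are assumptions for this sketch.
    """
    target = band_power(samples, sample_rate_hz, flicker_hz)
    offsets = [-5.0, -4.0, -3.0, -2.0, 2.0, 3.0, 4.0, 5.0]
    baseline = sum(
        band_power(samples, sample_rate_hz, flicker_hz + d) for d in offsets
    ) / len(offsets)
    return target > ratio_threshold * baseline


def synthetic_eeg(sample_rate_hz, seconds, flicker_hz, looking, seed=0):
    """Hypothetical stand-in for an EEG trace: noise, plus a flicker-frequency
    component only while the subject is looking at the monitor."""
    rng = random.Random(seed)
    n = int(sample_rate_hz * seconds)
    amplitude = 1.0 if looking else 0.0
    step = 2 * math.pi * flicker_hz / sample_rate_hz
    return [amplitude * math.sin(step * i) + rng.gauss(0.0, 1.0) for i in range(n)]


if __name__ == "__main__":
    for looking in (True, False):
        trace = synthetic_eeg(250, 4.0, 15.0, looking)
        command = "send FUS pulse" if should_trigger(trace, 250, 15.0) else "idle"
        print(f"looking={looking}: {command}")
```

In a real system the trigger would drive the ultrasound hardware rather than print a message, and the detection would have to cope with far messier signals than this synthetic trace.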

Obviously this was a fairly simple experiment. The human couldn’t send complex commands to the rat, like “stand up, walk left, and open the treasure chest.” More importantly, communication was one-way. The rat couldn’t send signals to the human. But the proof of concept now exists, and the researchers believe it could lead to human-to-human brain interfaces.

They offer one example of how such a brain-to-brain interface might work. It’s known that people sometimes experience “neural coupling,” where “the neural processes of one brain are coupled to the neural processes of another brain through various environmental routes, including indirect sensory/somatomotor communication.” Experiments have shown that when people understand each other while talking, they exhibit similar patterns of activation in their brains. Yoo and colleagues wonder if their system might “augment this mutual coupling of the brains,” and “have a positive impact on human social behavior.” In other words, you might put on a device like this during couples counseling so that you can sympathize more with your spouse during arguments.

Using this device might also help you train your dog. Or, you know, it might be something you’ll be forced to wear when your boss or political leader wants you to sympathize with their agendas.

Yoo and his colleagues are well aware of this possible dystopian application of their brain-to-brain interface, and admit as much in the final paragraph of their paper:

It is reasonable to assume that further advancements and establishment of BBI between human subjects, as well as within or across species, have the potential to trigger breaking ethical questions that cannot be satisfied by applying contemporary ethical concepts. However, it is beyond the scope of this paper to address the particular moral and philosophical issues and complex challenges, possibly even undesirable consequences that may arise with the future application of this emerging technology.

What they are saying here is that we may not even have the ethical concepts to encompass the possible uses of this technology, which sounds like something out of Ramez Naam’s novel Nexus. It also sounds incredibly disturbing. Are we opening the door to new vistas in human cruelty, or new avenues of communication between species? Hopefully our ethical development will outpace the development of this technology.

In another stunning first for neuroscience, researchers have created an electronic link between the brains of two rats, and demonstrated that signals from the mind of one can help the second solve basic puzzles in real time – even when those animals are separated by thousands of miles.

Here’s how it works. An “encoder” rat in Natal, Brazil, trained in a specific behavioral task, presses a lever in its cage it knows will earn it a reward. A brain implant records activity from the rat’s motor cortex and converts it into an electrical signal that is delivered via neural link to the brain implant of a second “decoder” rat.

Still with us? This is where things get interesting. Rat number two is in an entirely different cage. In fact, it’s in North Carolina. The second rat’s motor cortex processes the signal from rat number one and – despite being unfamiliar with the behavioral task the first rat has been conditioned to perform – uses that information to press the same lever.

The experiment, the results of which are published free of charge in today’s issue of Scientific Reports, was led by Duke neuroscientist Miguel Nicolelis, a pioneer in the field of brain-machine interfaces (BMIs). Back in 2011, Nicolelis and his colleagues unveiled the first such interface capable of a bi-directional link between a brain and a virtual body, allowing a monkey to not only mentally control a simulated arm, but receive and process sensory feedback about tactile properties like texture. Earlier this month, his team unveiled a BMI that enables rats to detect normally invisible infrared light via their sense of touch.

But an intercontinental mind-meld represents something new: a brain-to-brain interface between two live rats – one that enables realtime sharing of sensorimotor information. It’s a scientific first, and while it’s not telepathy, per se, it’s certainly something close. Neither rat was necessarily aware of the other’s existence, for example, but it’s clear that their minds were, in fact, communicating. “It’s not the Borg,” Nicolelis tells Nature’s Ed Yong. What he has created, he says, is “a new central nervous system made of two brains.”

Said nervous system is far from perfect. Untrained decoder rats receiving input from a trained encoder partner only chose the correct lever around two-thirds of the time. That’s definitely better than random odds, but still a far cry from the 95% accuracy of the encoder rats.
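Whether "two-thirds of the time" really beats random odds depends on how many trials were run, which the article does not say. A minimal sketch of the check, assuming two levers (so a 50% chance of guessing correctly) and using purely hypothetical trial counts rather than the study's data:

```python
from math import comb


def chance_tail_probability(correct, trials, p_chance=0.5):
    """Probability of getting at least `correct` hits out of `trials`
    by guessing alone (one-sided binomial tail)."""
    return sum(
        comb(trials, k) * p_chance**k * (1 - p_chance) ** (trials - k)
        for k in range(correct, trials + 1)
    )


if __name__ == "__main__":
    # Hypothetical trial counts, both at a two-thirds success rate.
    for correct, trials in [(4, 6), (40, 60)]:
        p = chance_tail_probability(correct, trials)
        print(f"{correct}/{trials} correct: P(at least this many by chance) = {p:.4f}")
```

The same two-thirds hit rate is unremarkable over a handful of trials and very hard to explain by luck over dozens, which is why the trial count matters when reading a figure like this.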

What this two-brain system does do, Nicolelis argues, is enable the rats to work with one another in unprecedented ways. And while neural communication between two animals on entirely separate continents is impressive in its own right, Nicolelis says the most groundbreaking application of this technology – a 3-, 4-, or n-mind “brain net” – is still to come.

“These experiments demonstrated the ability to establish a sophisticated, direct communication linkage between rat brains,” he said in a statement, “so basically, we are creating an organic computer that solves a puzzle.”

“We cannot predict what kinds of emergent properties would appear when animals begin interacting as part of a brain-net,” he continues. “In theory, you could imagine that a combination of brains could provide solutions that individual brains cannot achieve by themselves.”

The study is published in the latest issue of Scientific Reports. (No subscription required!)
